How Ruflo Turns Claude Code Into a Multi-Agent Platform With Memory, Swarms, and Federation

Trending, MIT-licensed: HNSW vector memory, 32 Claude Code plugins, and a 300+ MCP tool surface.

Ruflo sells a “nervous system” for Claude Code: 100+ agents, 300+ MCP tools, swarm consensus, self-learning neural routes.

The project’s own May 3 self-audit puts ~195 of ~240 tools in the “real code” column.

What works: memory, embeddings, task, claims, daa, workflow scheduling, most swarm and agent tools.

What’s stubbed: agent execution, hive-mind transport, workflow runtime, WASM agent, neural prediction.

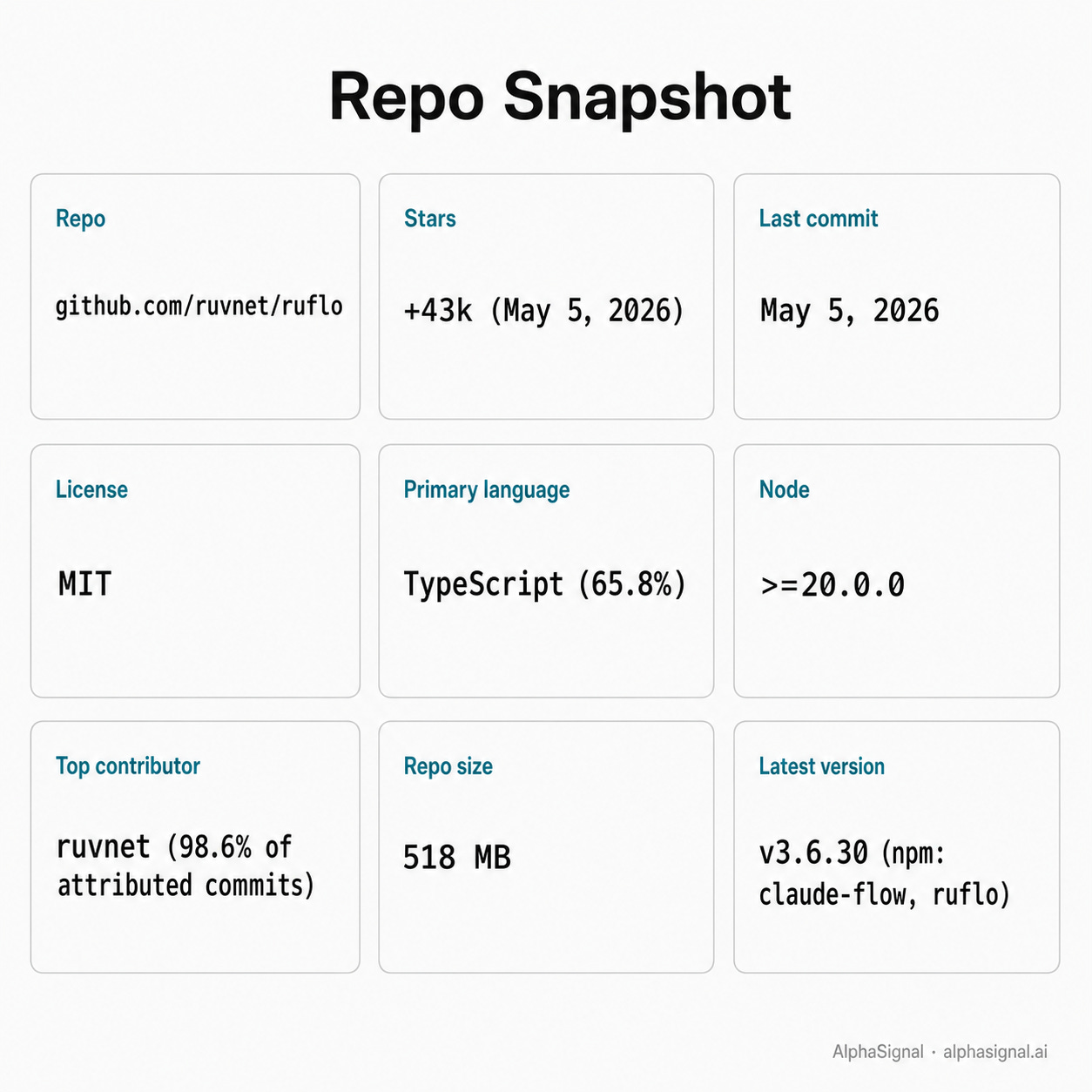

Repo Snapshot

Context

Ruflo is built by ruvnet (rUv on social). It originally shipped as Claude Flow and was renamed at v3.5.0 in February 2026, after 5,800+ commits and 55 alpha iterations.

The npm registry carries the same codebase under two names, claude-flow and ruflo, both at v3.6.30, with roughly 52,000 combined weekly downloads.

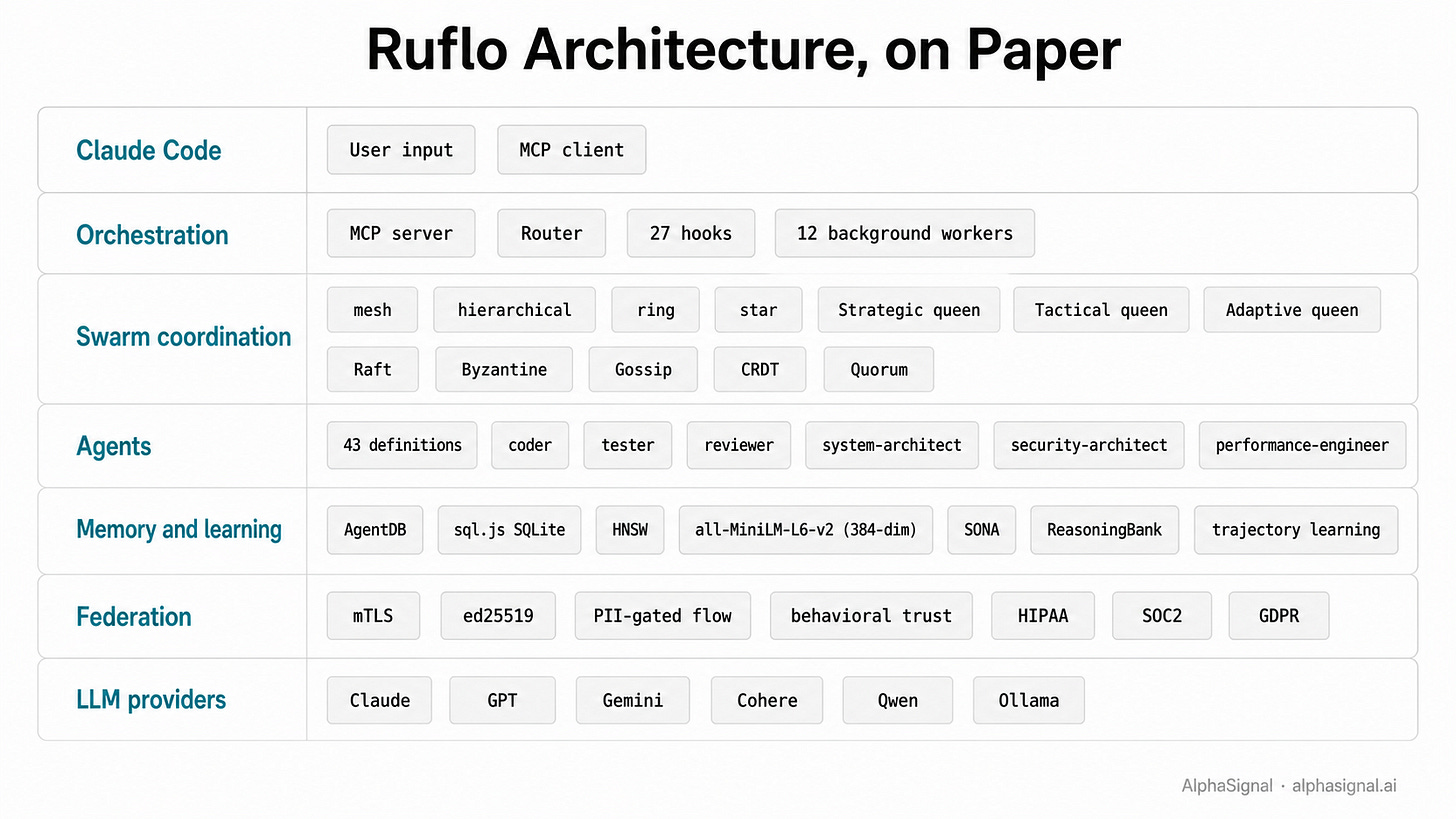

Architecture, on Paper

The README presents Ruflo as a layered runtime sitting between Claude Code and the LLM providers. One init command is claimed to wire all of it.

Ruflo ships 49 top-level commands, 32 Claude Code plugins, and a 300+ MCP tool surface exposed to Claude. Plugins group into eight README categories: core, memory, intelligence, code quality, security, architecture, DevOps, and domain-specific (with ruflo-iot-cognitum for IoT and ruflo-neural-trader for trading on the long tail).

Orchestration

An MCP server exposes the tool surface, a router decides which tool fires for each Claude request, and 27 hooks intercept tool calls to store context and trigger 12 background workers (audit, optimize, testgaps, and similar). The hooks are the surface Ruflo describes as session-to-session learning.

Swarm coordination

Agents are described as organizing into one of four topologies: mesh, hierarchical, ring, or star. A hive-mind mode adds three queen types (Strategic, Tactical, Adaptive) and eight worker roles. Five consensus protocols ship by name: Raft, Byzantine, Gossip, CRDT, and Quorum.

Agents

43 agent definitions ship as markdown files under .claude/agents/, covering coder, tester, reviewer, system-architect, security-architect, performance-engineer, and domain roles. The README counts these plus dynamic spawning as “100+ specialized agents.”

Memory and learning

AgentDB handles vector storage on SQLite, with HNSW indexing on 384-dim embeddings via all-MiniLM-L6-v2. The self-learning surface adds SONA (Self-Optimizing Neural Adapter), ReasoningBank, and trajectory learning. Sessions persist via JSON key-value snapshots.

Federation

Cross-machine agent communication is described as mTLS plus ed25519 signatures, with a PII-gated data flow, behavioral trust scoring, and compliance audit trails marketed for HIPAA, SOC2, and GDPR.

LLM providers

Smart routing across Claude, GPT, Gemini, Cohere, Qwen, and Ollama, with failover. The ruvLLM plugin is described as routing to local LoRA adapters via SONA for offline use.

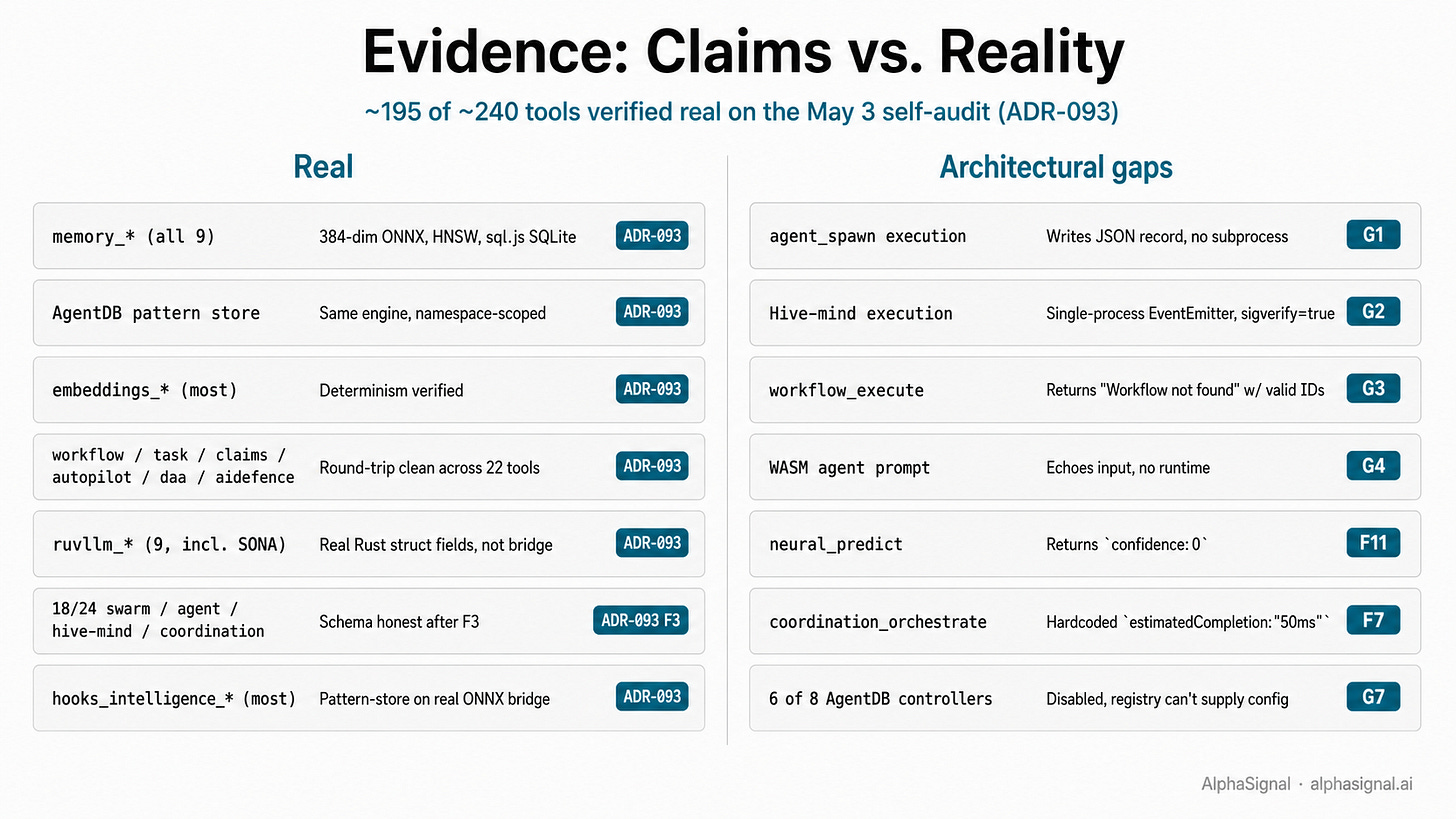

Evidence: Claims vs. Reality (yet)

“Every classification below traces to the public roman-rr audit gist, ADR-093, or ADR-095. The article’s findings are reproductions of the project’s own published documentation, not independent test results”

The April audit by roman-rr (v3.5.51) flagged ~290 of 300+ tools as stubs. One month and nine ADRs later, Ruflo’s own May 3 verification swarm (ADR-093) re-checked the running server and the picture inverted.

ADR-085 confirmed the historical await sleep(352) baseline and the += 100 hardcoded token-savings figures. ADR-093 fixed the contract honesty: no more silent “completed” lies, schemas that round-trip what callers pass. The execution-layer holes that remain are tracked in ADR-095 as G1 through G7.

On the May 3 self-audit, ~195 of ~240 tools verified real. The gaps are architectural, not surface-wide.

How to Get Started

The README ships three install paths, 49 top-level commands, and 32 plugins. Most surfaces run real code, with specific execution-layer gaps tracked in ADR-095 (see Step 5). The minimal path that delivers value is install, verify, then use the memory layer.

Step 1: Install

# One-line install

curl -fsSL https://cdn.jsdelivr.net/gh/ruvnet/ruflo@main/scripts/install.sh | bash

# Or via npx (interactive setup)

npx ruflo@latest init wizardThe wizard installs the plugin set, registers the Claude Code hooks, and writes config under .claude/ and .swarm/ in the current project. About 30 to 60 seconds on a clean project.

or

/plugin marketplace add ruvnet/ruflo

/plugin install ruflo-core@rufloThe Claude Code plugin path. Installs into Claude Code itself rather than a project directory. Useful if Ruflo should be available across every repo Claude Code touches.

claude mcp add ruflo -- npx -y @claude-flow/cli@latestThe MCP server path. Lightest install. Exposes only the MCP tool surface to Claude Code, which is the right scope for using the working memory and embeddings tools and ignoring the rest.

Step 2: Verify the install

ruflo verifyCryptographic verification of the installed bytes against the published manifest. v3.6.x added this in response to issue #1375, where versions 3.1.0-alpha.55 through 3.5.2 shipped an obfuscated preinstall script that walked ~/.npm/_npx/ and deleted directories. Run it on every fresh install.

If the install reports problems:

ruflo doctor --fixChecks Node 20+, npm 9+, the daemon, the memory database, MCP servers, and disk space. Apply suggested fixes one at a time rather than blanket-accepting.

Step 3: Store and search memory

This is the part the audit confirmed runs real code. HNSW-indexed embeddings, SQLite persistence, namespace-scoped storage.

Store a pattern:

npx ruflo@latest memory store --key “pattern-auth” --value “JWT authentication with refresh tokens”Search it back semantically:

npx ruflo@latest memory search --query “authentication patterns”The store generates 384-dim embeddings via all-MiniLM-L6-v2 and indexes them with HNSW. Search returns ranked hits by cosine similarity. List entries in a namespace with memory list --namespace patterns.

Step 4: Use it inside Claude Code

Once Ruflo is registered as an MCP server, the same memory surface appears as MCP tools the Claude Code agent calls directly: memory_store, memory_search, memory_retrieve. The agent can store learnings during one session and retrieve them in the next without the user repeating context.

Pair it with the embeddings tools (embeddings_generate, embeddings_compare) for ad-hoc semantic comparison and the session tools (session_save, session_restore) for persistence across context windows.

Step 5: Skip these surfaces

The following surfaces still have execution-layer gaps. They will not error loudly, but they will not deliver the coordination, training, or runtime the README implies:

ruflo swarm * and ruflo hive-mind *. Schemas honest, execution still single-process (ADR-095 G2)

ruflo neural train and ruflo neural predict. neural_predict returns confidence:0 (F11)

ruflo workflow execute. Returns “Workflow not found” with valid IDs (G3)

ruflo agent spawn. Writes a JSON record, no subprocess (G1)

The auto-memory hook also injects ~5,706 entries with ~20 unique into every Claude message (ADR-095 G6). ruflo doctor --fix does not yet dedupe this. The fix is tracked as a follow-up ADR.

Compared To

Claude Code native is the baseline Ruflo claims to extend. The working subset (HNSW memory, embeddings, task, claims, session tools) adds capability Claude Code lacks. The hive-mind execution layer is still single-process per ADR-095 G2, so cross-machine swarm coordination does not yet work.

CrewAI is the closest open-source peer for explicit multi-agent orchestration. CrewAI is Python and role-based, with no built-in vector memory. The honest trade-off: CrewAI runs the coordination it advertises.

Current Limitations

Agent execution is unwired. agent_spawn writes a JSON record into an in-memory Map. Provider classes exist but the agent / task / swarm code paths do not import them (ADR-095 G1).

Hive-mind is single-process. EventEmitter-based, no inter-node transport. The consensus parameter round-trips after ADR-093 F3, but the handler underneath has no sockets or distributed protocol (ADR-095 G2).

Workflow execution lacks a runtime. workflow_execute returns “Workflow not found” even with valid stored workflows. No executor walks the dependency graph (ADR-095 G3).

WASM agent echoes input. No runtime, no LLM call. Returns the input prefixed with “echo:” (ADR-095 G4).

Auto-memory injects 5,706 entries with ~20 unique into every Claude message. Trigram Jaccard graph instead of the 384-dim embeddings used elsewhere. PageRank uniform across nodes, meaningless at the graph’s density (ADR-095 G6).

MCP tool descriptions don’t always differentiate from native Claude Code tools. Issue #1748 #4 found 237 of 300 descriptions don’t tell Claude when to use Ruflo’s version over native (Bash, Read, Grep, Glob). v3.6.30 sharpened 7. The remaining 230 fall through to native, so Ruflo’s tools fire less often than the install suggests.

Benchmark numbers are synthesized. simulate_benchmarks.py generates results with random.uniform(-0.05, 0.05) against hardcoded base rates. Ruflo does not appear on the official SWE-bench leaderboard.

Active remediation. Between April 4 and May 4, the project shipped nine ADRs (088 plus 092 through 099). Encryption at rest is implemented in four phases with 76 new tests (ADR-096). The architectural gaps above are the open items.

So the best recommendation is to use the working subset and watch the remediation track.

AlphaSignal Take

The README sells a self-organizing swarm with consensus and neural routing. The May 3 self-audit puts ~195 of ~240 tools in the real column. The remaining gaps are architectural, not surface-wide: agent execution is unwired, hive-mind transport is single-process, workflow runtime is absent, WASM agent echoes input.

The remediation cycle is still active. v3.6.28 through v3.6.30 shipped today (5 May), including runtime-honesty fixes (the unverified “2.49× Flash Attention” recommendation was retagged as “in progress”) and the plugin-install hook layout fix that had been loading zero hooks before. Nine ADRs in 30 days, encryption at rest with 76 tests, and an Ed25519-signed witness manifest covering 55 verified fixes.

Worth Watching. Use the memory, embeddings, task, and claims tools today. The architectural gaps are real but tracked. Re-evaluate when v3.7 ships the agent-execution wire (ADR-095 G1) and per-controller activations (G7).

Who Benefits

Claude Code users who want a drop-in HNSW memory layer with persistent embeddings, teams testing semantic-search workflows on top of Claude, developers who want one MIT-licensed install for embeddings, agent definitions, and Claude Code hooks.

Who Doesn’t

Anyone counting on Byzantine consensus, multi-machine swarm coordination, end-to-end agent execution, or the workflow runtime for production work, teams that need verified benchmark numbers before adopting, engineers who would rather assemble lightweight pieces (a vector store plus a hook) than ship 518 MB.

Practitioner Implication

Ruflo is usable today for memory, embeddings, task, and claims management on top of Claude Code. The multi-agent swarm runtime its README describes does not yet execute end-to-end.

Links

github.com/ruvnet/ruflo (repo, ~5 min skim)

ADR-093 May audit remediation (project’s six-agent verification swarm, ~10 min)

ADR-095 architectural gaps (G1–G7 tracking, ~5 min)

roman-rr audit gist (April audit that triggered the remediation, ~15 min)

Follow @AlphaSignalAI for more content like this.

Check out AlphaSignal.ai to get a daily summary of top models, repos, and papers in AI. Read by 280,000+ devs.

Questions?

Q: Is Ruflo safe to install? A: v3.6.x patched the obfuscated preinstall and SQL injection flagged in issue #1375 from March 2026. Running ruflo verify after install confirms the bytes match the published manifest.

Q: Does Ruflo really hit 84.8% on SWE-bench? A: No. The number comes from simulate_benchmarks.py in the repo, which generates results with random.uniform(-0.05, 0.05) added to hardcoded base rates. Ruflo does not appear on the official SWE-bench leaderboard.

Q: What part of Ruflo actually works? A: Memory, embeddings, task, claims, daa, workflow scheduling, and most swarm and agent registry tools. The May 3 self-audit (ADR-093) verified ~195 of ~240 tools real. The execution-layer gaps are agent_spawn (no subprocess), hive-mind (single-process), workflow_execute (no runtime), and WASM agent (echo only).

Q: Should Ruflo replace CrewAI or AutoGen? A: Not for swarm coordination. As a Claude-Code-native memory, embeddings, task, and claims layer, yes.